
TL;DR:

- 92% of students now use AI tools in their academic work, improving productivity and publication rates.

- AI enhances research by automating tasks like literature reviews, drafting, and editing, but requires human verification for accuracy.

- Responsible AI use involves understanding its limitations, avoiding bias, and maintaining critical thinking skills.

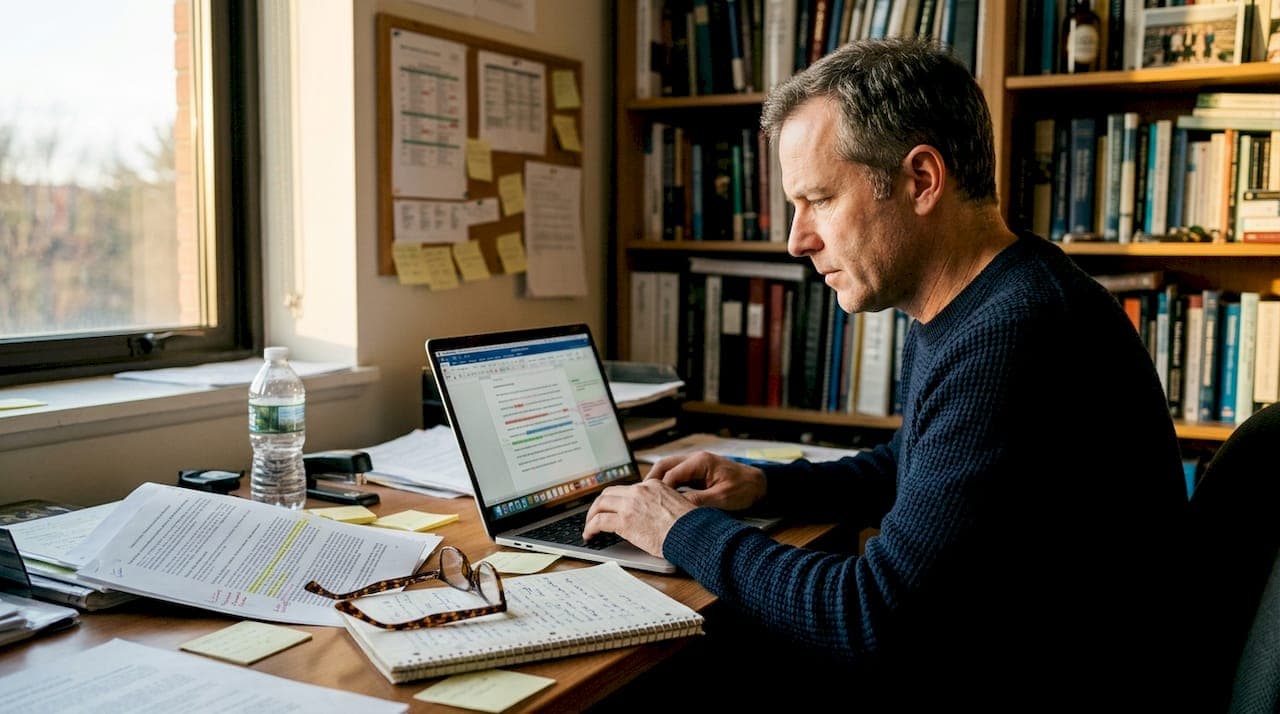

A striking 92% of students now report using AI tools in their academic work, a number that would have seemed impossible just five years ago. The shift is not just about convenience. Researchers who adopt AI are publishing more, getting cited more often, and moving faster through their academic careers. But the story is not all upside. Understanding what AI genuinely offers, where it struggles, and how to use it responsibly is what separates researchers who benefit from those who get burned. This guide breaks all of that down so you can make smarter decisions about AI in your own work.

| Point | Details |
|---|---|
| AI accelerates research | Most academic tasks like drafting and data analysis are faster and more productive with AI. |
| Choose tools carefully | Select AI models trained for academic tasks and always check their benchmarks and user ratings. |
| Critical review required | Human oversight is essential to catch errors, avoid bias, and ensure originality in AI-assisted writing. |
| Ethical use matters | Stay mindful of privacy, academic honesty, and interdisciplinary engagement when using AI. |
| AI is an assistant | Leverage AI for routine tasks while keeping your own analysis and creativity at the center. |

The case for AI in academia is not built on hype. It is built on measurable outcomes. Automation in research now covers tasks that used to eat entire weeks: literature searches, data analysis, experiment design support, and manuscript drafting. What once took a graduate student three days can now take three hours.

For writing specifically, AI tools assist by generating first drafts, summarizing long documents, improving grammar, explaining complex concepts, and suggesting new research directions. These are not minor conveniences. They are the difference between staring at a blank page and having a structured draft ready to refine.

Here is a quick look at what AI handles well versus where it still needs human support:

| Task | AI strength | Human input needed |
|---|---|---|
| Literature review | High | Moderate (verification) |
| Grammar and style | Very high | Low |
| First draft generation | High | High (refinement) |
| Experiment design | Low | Very high |
| Citation management | Moderate | High |
| Data interpretation | Moderate | High |

The pattern is clear. AI writing tools for students shine brightest on structured, repeatable tasks. Open-ended creative or analytical work still needs you.

Key benefits of AI for academic work:

Pro Tip: Use AI to generate a rough outline before you write a single word. This forces you to confront gaps in your argument early, saving hours of rewriting later.

The productivity gains from AI are real, but they scale with how deliberately you use the tools. Passive use gets modest results. Strategic use changes your output entirely.

Not all AI tools are equal, and knowing why helps you pick the right one for your research. Modern AI writing tools are built on large language models (LLMs), which are systems trained on massive text datasets to predict and generate coherent language. The bigger the model, the more general its knowledge. But bigger does not always mean better for academic work.
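To make "predict and generate" concrete, here is a toy sketch of next-word prediction. It is a simple bigram counter, not how production LLMs are built (those use neural networks trained on far larger datasets), and the function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Illustrative training text (an assumption, not real data).
corpus = (
    "the model predicts the next word "
    "the model generates text "
    "the model predicts the next token"
)
model = train_bigram(corpus)
print(predict_next(model, "model"))  # prints "predicts"
```

The toy model can only repeat patterns it has counted, which hints at why the training data matters so much: a model that has seen more text from your field has better statistics for your field's language.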

Research shows that domain-trained AI models can outperform much larger general-purpose models on research-specific tasks. A smaller model trained on scientific literature will often produce more accurate, relevant outputs than a giant model trained on everything from social media posts to legal contracts. This is a counterintuitive but important insight for researchers choosing tools.

For evaluating AI tools, three benchmarks come up most often in the literature:

Systems like PaperOrchestrator, which uses multi-agent LLM coordination, have achieved state-of-the-art (SOTA) results on academic paper writing benchmarks, meaning they outperform previous best results on standardized tests for research writing quality.

"The best AI tool for your research is not the most famous one. It is the one trained closest to your field."

Here is a comparison of AI model types relevant to academic writing:

| Model type | Best for | Limitation |
|---|---|---|
| General LLM (e.g., GPT-4) | Broad writing, grammar | Less precise on niche topics |
| Domain-trained LLM | Field-specific research tasks | Narrower general utility |
| Multi-agent systems | Full paper drafting | Requires more setup |
| Fine-tuned summarizers | Literature review | Limited to summarization |

Understanding AI and academic performance also means knowing that LLMs can hallucinate, meaning they sometimes generate plausible-sounding but factually wrong information. This is not a flaw to dismiss. It is a core limitation that demands human verification at every stage.
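One way to make that verification habit systematic is to pull out every sentence in an AI draft that contains a number or a citation, since those are the claims most worth checking by hand. The sketch below is a rough helper under simple assumptions: the regular expressions, the function name `flag_for_review`, and the sample draft (including the placeholder citation) are all invented for illustration, and it will miss claims that carry no number or author-year citation.

```python
import re

# Assumed citation style: (Author, 2024) or (Author et al., 2024).
CITATION = re.compile(r"\([A-Z][A-Za-z]+(?: et al\.)?, \d{4}\)")


def flag_for_review(draft):
    """Return (sentence, reasons) pairs for sentences a human should verify."""
    # Naive sentence split: break after ., ! or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    flagged = []
    for sentence in sentences:
        reasons = []
        if CITATION.search(sentence):
            reasons.append("citation")
        # Look for digits outside citations so a year alone is not counted twice.
        without_citations = CITATION.sub("", sentence)
        if re.search(r"\d", without_citations):
            reasons.append("number")
        if reasons:
            flagged.append((sentence, reasons))
    return flagged


# Made-up sample draft; "(Smith, 2024)" is a placeholder, not a real source.
draft = (
    "AI tools can speed up drafting. "
    "Adoption reached 92% of students (Smith, 2024). "
    "Careful review remains essential."
)
for sentence, reasons in flag_for_review(draft):
    print(reasons, sentence)
```

A list like this does not verify anything on its own. It only tells you where to look, and each flagged sentence still needs to be checked against the original source.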

Knowing that AI works is one thing. Knowing exactly where to plug it into your process is what creates real results. Here is a practical workflow for integrating AI across the stages of academic writing and research:

The productivity numbers behind this approach are striking. Researchers using AI tools publish 3.02x more papers, earn 4.84x more citations, and lead independent projects 1.37 years earlier than peers who do not use these tools.

Stat to know: Researchers using AI publish over 3x more papers and earn nearly 5x more citations on average.

For paper quality, results from multi-agent systems like PaperOrchestrator show that coordinating multiple AI agents across different writing tasks produces more complete, coherent papers than using a single AI tool for everything.

Pro Tip: Never submit AI-generated content without reading every sentence yourself. AI drafts are starting points. Your critical analysis is what makes the work genuinely yours.

The opportunities are real, but so are the risks. Using AI in academic work without understanding its limitations can damage your research quality, your reputation, and potentially your academic standing.

The most well-documented risks include:

These challenges in AI research are not theoretical. They are showing up in retracted papers, failed dissertations, and disciplinary hearings at universities worldwide.

There is also a broader structural concern. AI use can reduce interdisciplinary engagement by 22% and contract collective scientific focus by 4.63%, creating a risk of scientific monoculture where research converges on the same questions, methods, and conclusions.

"AI narrows the field of vision when researchers stop asking questions the model was not trained to answer."

Understanding AI ethics in education means recognizing that the tool is only as responsible as the researcher using it. Universities and journals are actively developing new policies, and staying informed about your institution's guidelines is not optional.

Human oversight is not just good practice. It is the difference between research that advances knowledge and research that recycles it.

Here is the perspective that most AI enthusiasm glosses over: AI is genuinely transformative for academic work, but only when you stay in the driver's seat. The researchers seeing the biggest gains are not the ones handing everything to AI. They are the ones using AI to handle the mechanical load so they can focus their energy on the thinking that actually matters.

AI excels at synthesis and organization. It does not excel at the kind of lateral thinking that produces original research questions or the judgment required to evaluate conflicting evidence. Those skills are yours, and they are what make your work worth reading.

The practical approach is to verify outputs rigorously at every stage, treat AI drafts as raw material, and never let the tool make interpretive decisions on your behalf. The framework that works best is simple: AI handles the structure, you supply the substance.

Future-ready researchers will not be the ones who use AI the most. They will be the ones who use it the most deliberately, combining its speed with their own intellectual honesty and originality.

You now have a clear picture of what AI can and cannot do for your academic work. The next step is putting the right tools in your hands.

Samwell.ai is built specifically for students and researchers who want AI support without sacrificing academic integrity. The enhanced essay creator handles outlining, drafting, and editing with built-in citation formatting and real-time AI detection checks. Every feature is designed to keep your work original and credible. Over 1,000,000 students and academics already use the Samwell.ai platform to write faster and publish better. Try it on your next paper and see the difference structured AI support makes.

AI can help identify and rephrase similar content, but human verification is essential to ensure genuine originality and proper attribution in your work.

AI tools assist most effectively with literature review, summarizing texts, drafting, and grammar checking, while experiment design gains remain limited and require significant human expertise.

Yes. AI brings risks including factual hallucinations, algorithmic bias, data privacy concerns, and a measurable decline in critical thinking when overused without human oversight.

Always cross-check every factual claim independently, confirm citations against original sources, and treat AI-generated outputs as drafts that require thorough human review before submission.

AI use can reduce interdisciplinary engagement by 22%, so researchers need to make conscious efforts to connect with diverse fields and avoid narrowing their academic focus.