
TL;DR:

- AI is now seen as an assistive tool, not a replacement for students' own thinking.

- Detection tools are unreliable, with high false positives and negatives, making policy enforcement challenging.

- Responsible AI use involves transparency, verification, and avoiding full content generation to protect academic integrity.

Most students assume AI either writes their paper for them or gets them caught. Neither is the full story. The reality is more nuanced: LLMs generate academic text with high semantic similarity but also high AI-detection rates and poor readability scores, which means blindly trusting AI output is a losing strategy. What actually works is understanding where AI helps, where it hurts, and how to stay on the right side of your institution's policies. This article walks you through the ethical use cases, the real limits of AI detection, and the practical habits that protect your academic record.

| Point | Details |
|---|---|
| AI boosts efficiency | When used correctly, AI can drastically speed up brainstorming, drafts, and editing. |
| Disclosure is essential | Always disclose your AI use to align with institutional policies and avoid ethical breaches. |
| Detection tools have limits | AI detectors aren’t fully reliable; combine them with human oversight whenever possible. |
| Critical thinking still matters | Rely on AI for support, but maintain your originality, analysis, and voice. |

The conversation around AI in academia has shifted fast. A year ago, most institutions were scrambling to ban it outright. Now, the dominant view among researchers and policymakers is that AI is an assistive tool, not an author, and the rules are being written around that distinction.

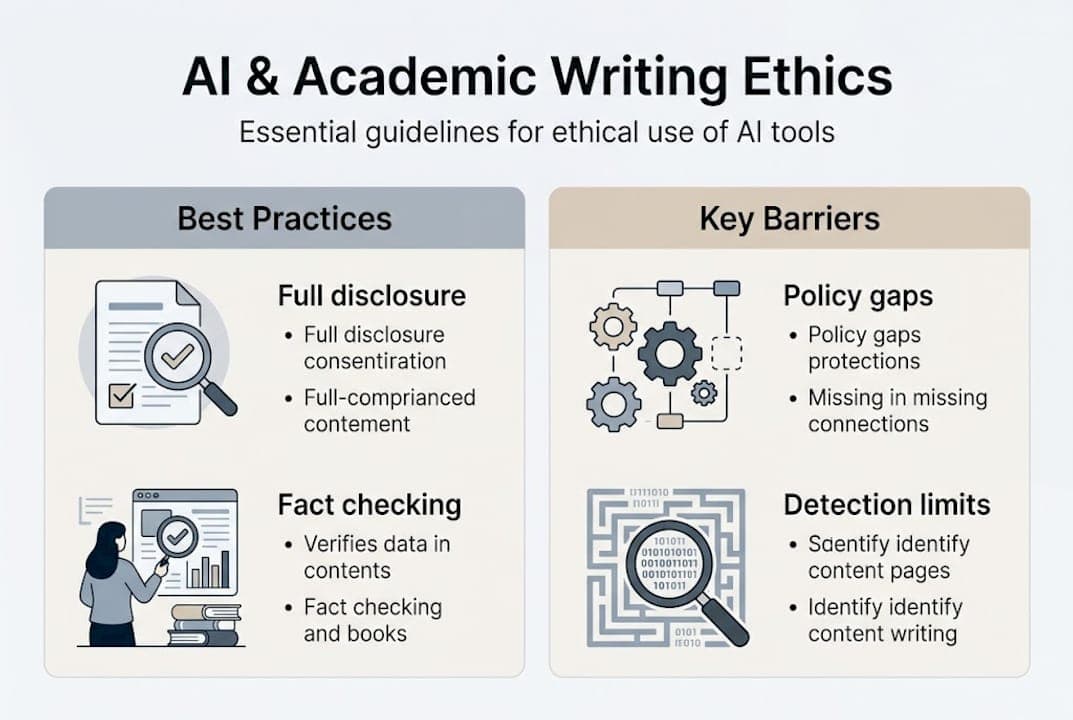

The core ethical principles guiding AI use in scholarly writing today include human accountability, mandatory disclosure of AI use, and a firm boundary: AI supports your thinking, it does not replace it. That means you remain responsible for every claim, every argument, and every citation in your work, regardless of what a tool suggested.

Universities are not all on the same page, but the trend is clear. University policies now emphasize transparency and prohibit substantive content generation without disclosure, and some permit limited use with formal acknowledgment. Here is how several institutional approaches compare:

| Institution type | AI use permitted | Disclosure required | Authorship stance |
|---|---|---|---|
| Research universities | Limited, task-specific | Yes, always | Human only |
| Liberal arts colleges | Case-by-case | Yes, per assignment | Human only |
| Online institutions | Often broader | Yes, platform-logged | Human primary |
| Graduate programs | Restricted | Mandatory in thesis | Human only |

The key ethical guidelines to follow in 2026 include:

"AI should enhance the human writer's capabilities, not substitute for them. Oversight, verification, and transparency are non-negotiable."

Understanding these boundaries is the foundation. Once you know what is allowed, you can start using AI in ways that genuinely improve your work. These norms are evolving quickly, so staying current on AI trends in academic integrity matters. You can also get a solid grounding in the basics through this AI writing overview.

Now that you know where the lines are, let's talk about what you can actually do. The good news is that there is a meaningful range of tasks where AI adds real value without crossing ethical boundaries.

Ethical AI assistance covers brainstorming, literature reviews, outlining, grammar and style improvements, and citation formatting, but only when you verify outputs and avoid overreliance. That last part is not a footnote. It is the whole point.

Here is a step-by-step approach to integrating AI ethically into your writing process:

Pro Tip: Every time AI suggests a source or a fact, go find the original document yourself. AI hallucinations (fabricated citations) are common, and submitting a fake reference is an academic integrity violation, even if you did not know it was fake.

Knowing what to avoid is just as important. Do not use AI to generate full paragraphs, conclusions, or literature review summaries that you paste directly into your paper. Even with disclosure, many institutions treat this as a violation of authorship standards. The guides on boosting writing efficiency without cutting corners and the roundup of the best AI writing tools for students go deeper on approved workflows.

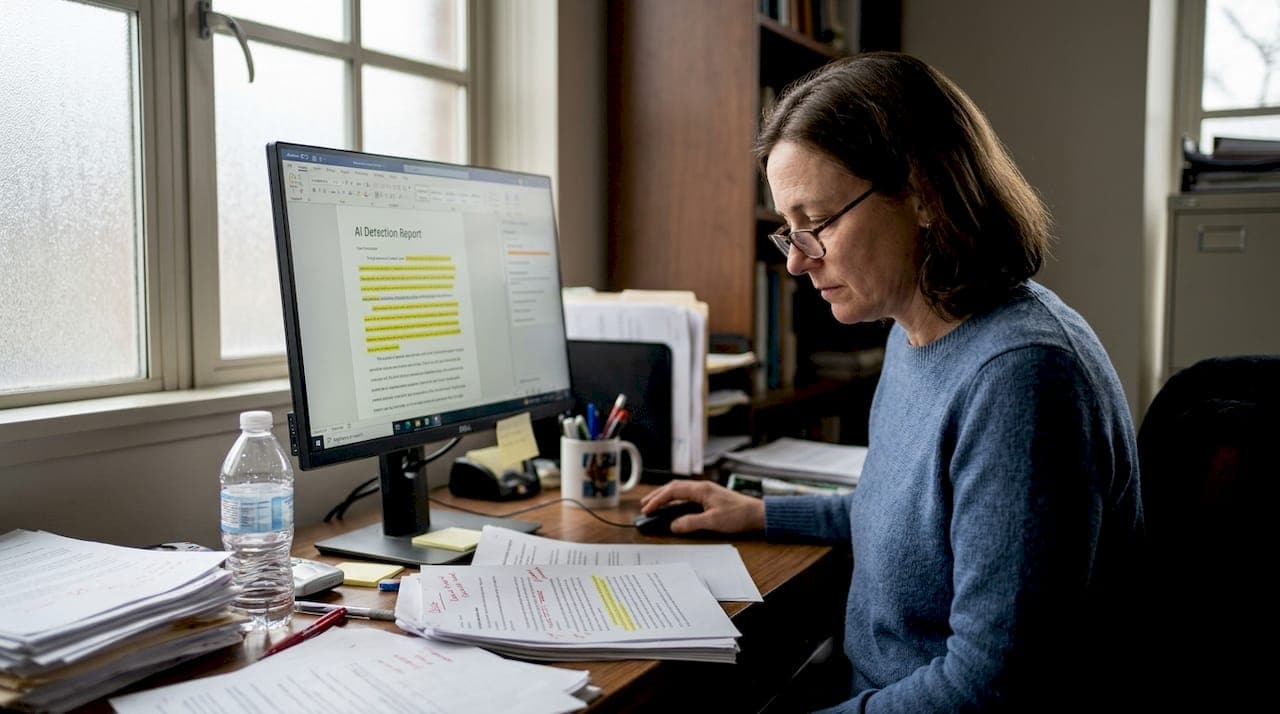

Here is where things get uncomfortable for both students and faculty. Many institutions are relying on AI detection tools to enforce their policies, but the data on those tools is not reassuring.

Research on AI detector accuracy shows Turnitin reaching only 61% accuracy and Originality.ai hitting 69%, with both performing poorly on hybrid texts, where a student writes most of the content and uses AI for editing or restructuring. On hybrid texts, recall (the share of AI-assisted texts a detector actually flags) drops to 0.31 for Turnitin and as low as 0.02 for Originality.ai. Detection also degrades as text length increases, and there is documented bias against writers whose first language is not English.

| Detector | Overall accuracy | Hybrid text recall | EFL bias documented |
|---|---|---|---|
| Turnitin | 61% | 0.31 | Yes |
| Originality.ai | 69% | 0.02 | Yes |
| GPTZero | Moderate | Low | Partial |

Key takeaway: A clean detection report does not mean your work is original, and a flagged report does not mean you cheated. Both false positives and false negatives are common.
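To make the recall figures concrete, here is a minimal Python sketch of the arithmetic. It assumes a hypothetical batch of 100 hybrid (human-written, AI-edited) texts; the batch size and the helper name `expected_flags` are illustrative and not part of any detector's API. Only the two recall values come from the figures quoted above.

```python
def expected_flags(num_ai_assisted: int, recall: float) -> tuple[int, int]:
    """Return (flagged, missed) counts for a batch of AI-assisted texts.

    Recall is the fraction of truly AI-assisted texts that a detector flags,
    so the expected number flagged is simply batch size times recall.
    """
    flagged = round(num_ai_assisted * recall)
    missed = num_ai_assisted - flagged
    return flagged, missed


# Hybrid-text recall values quoted in the article.
HYBRID_RECALL = {"Turnitin": 0.31, "Originality.ai": 0.02}

# Hypothetical batch size, chosen only to make the numbers easy to read.
BATCH_SIZE = 100

for detector, recall in HYBRID_RECALL.items():
    flagged, missed = expected_flags(BATCH_SIZE, recall)
    print(f"{detector}: flags {flagged} of {BATCH_SIZE} hybrid texts, misses {missed}")
```

Under those assumptions, Turnitin would miss roughly 69 of 100 hybrid texts and Originality.ai roughly 98. The sketch covers only false negatives. Estimating false positives would need a false-positive rate, which the figures above do not provide.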

Practical implications for students and faculty:

The gap between AI and human content is narrowing in ways that make detection increasingly unreliable. Institutions that rely solely on these tools are building policy on a shaky foundation.

Even when you use AI ethically, there are real costs to leaning on it too heavily. The most serious ones are not about getting caught. They are about what you lose.

Overreliance on AI risks producing generic content and eroding the writing skills that academic work is designed to build. If AI is always cleaning up your sentences, you never learn to write them well. If AI is always suggesting your structure, you never develop the judgment to build an argument from scratch.

Research also shows that AI boosts text quality in measurable ways, improving coherence and vocabulary range, but at the cost of reduced metacognition. That means students who rely heavily on AI think less critically about their own writing process, which is the opposite of what academic training is for.

Common mistakes and hidden costs of overreliance:

Pro Tip: Set a rule for yourself: write every first draft without AI. Use AI only in revision. This one habit keeps your skills sharp and your ideas genuinely your own.

The challenges with AI tools are real, and so is AI's impact on quality. The goal is not to avoid AI entirely. It is to stay in control of your own intellectual process while using it.

"Human-in-the-loop" sounds like jargon, but it describes something genuinely important. When you stay actively involved in every step of your writing, AI becomes a mirror that reflects your ideas more clearly, not a ghostwriter that replaces them. That distinction is what separates students who grow from students who stagnate.

The uncomfortable truth is that AI does not cause integrity problems. Shortcuts do. Students who would have copied from a website in 2015 are now pasting AI output in 2026. The tool changed. The behavior did not. Transparency and verification are not just policy requirements. They are the habits that protect you long-term, because they force you to own your work.

Faculty and institutions also need to move past the idea that detection tools solve the problem. They do not. What works is building assignments that require genuine thinking, rewarding process documentation, and treating AI in education as a skill to teach, not a threat to eliminate. Students who learn to use AI critically will be better researchers, better writers, and better professionals.

Using AI responsibly in academic writing is easier when your tools are built for it from the start.

Samwell.ai is designed specifically for students and academic professionals who want the efficiency of AI without compromising their integrity. The platform supports ethical workflows with features like guided essay structures, a Power Editor for targeted revisions, real-time AI detection checks, and full compliance with APA and MLA citation standards. Over 1,000,000 students from leading universities already use it to write smarter, not just faster. If you want to stay current on how AI is reshaping academic writing, the AI writing trends resource is a strong next step. Samwell.ai helps you stay ahead without cutting corners.

Most universities permit limited, transparent AI use for tasks like grammar checks or brainstorming, but require full disclosure for any substantive content. Policies vary, so always check your institution's specific guidelines before using any tool.

Detection accuracy is moderate at best, with Turnitin at 61% and Originality.ai at 69%, and both tools struggle significantly with hybrid texts and longer documents. Non-native English writers also face a higher risk of false positives.

Brainstorming, outlining, grammar suggestions, and citation formatting are typically acceptable uses, while generating full paragraphs or sections of content is not. Always verify any AI output before including it in your work.

Overreliance risks include loss of writing skills, generic content, reduced critical thinking, and potential plagiarism violations. Maintaining human oversight at every stage is the most effective safeguard.